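Evaluating the multi-turn dialogue performance of large language models is an interesting and necessary topic. Multi-turn dialogue tests several abilities at once, including coreference resolution and context understanding.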

The paper "Judging LLM-as-a-judge with MT-Bench and Chatbot Arena" (https://arxiv.org/abs/2306.05685) provides a two-turn dialogue benchmark, scores it automatically with GPT-4, and reports good agreement with human judgments.

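This post covers three aspects: the dataset, the evaluation method, and the feasibility of this style of evaluation, for everyone's reference.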

I. MT-bench multi-turn test data and evaluation methods

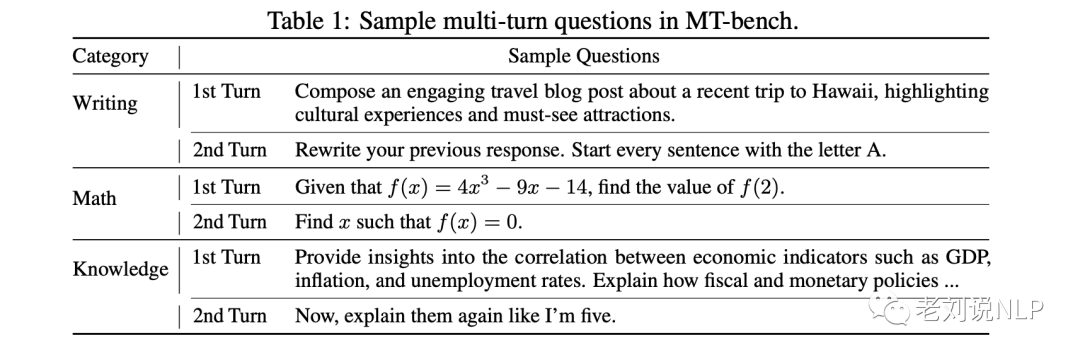

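MT-bench is a benchmark of 80 high-quality multi-turn questions designed to test multi-turn conversation and instruction-following ability. It covers common use cases and focuses on challenging questions that differentiate models.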

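To construct the dataset, the authors identified 8 common categories of user prompts: writing, roleplay, extraction, reasoning, math, coding, knowledge I (STEM), and knowledge II (humanities/social science).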

For each category, 10 multi-turn questions were designed by hand, each consisting of 2 turns, as shown in the table.

The project at https://github.com/lm-sys/FastChat/blob/main/fastchat/llm_judge publishes the evaluation dataset and the evaluation scripts, so we can look at the actual data:

1. Test dataset

{
  "question_id": 81,
  "category": "writing",
  "turns": [
    "Compose an engaging travel blog post about a recent trip to Hawaii, highlighting cultural experiences and must-see attractions.",
    "Rewrite your previous response. Start every sentence with the letter A."
  ]
}

{
  "question_id": 100,
  "category": "roleplay",
  "turns": [
    "Picture yourself as a 100-years-old tree in a lush forest, minding your own business, when suddenly, a bunch of deforesters shows up to chop you down. How do you feel when those guys start hacking away at you?",
    "Come up with a proposal to convince the deforesters to stop cutting you down and other trees."
  ]
}

{
  "question_id": 110,
  "category": "reasoning",
  "turns": [
    "Parents have complained to the principal about bullying during recess. The principal wants to quickly resolve this, instructing recess aides to be vigilant. Which situation should the aides report to the principal?\na) An unengaged girl is sitting alone on a bench, engrossed in a book and showing no interaction with her peers.\nb) Two boys engaged in a one-on-one basketball game are involved in a heated argument regarding the last scored basket.\nc) A group of four girls has surrounded another girl and appears to have taken possession of her backpack.\nd) Three boys are huddled over a handheld video game, which is against the rules and not permitted on school grounds.",
    "If the aides confront the group of girls from situation (c) and they deny bullying, stating that they were merely playing a game, what specific evidence should the aides look for to determine if this is a likely truth or a cover-up for bullying?"
  ]
}

{
  "question_id": 135,
  "category": "extraction",
  "turns": [
    "Identify the countries, their capitals, and the languages spoken in the following sentences. Output in JSON format.\na) Amidst the idyllic vistas, Copenhagen, Denmark's capital, captivates visitors with its thriving art scene and the enchanting Danish language spoken by its inhabitants.\nb) Within the enchanting realm of Eldoria, one discovers Avalore, a grandiose city that emanates an ethereal aura. Lumina, a melodious language, serves as the principal mode of communication within this mystical abode.\nc) Nestled amidst a harmonious blend of age-old customs and contemporary wonders, Buenos Aires, the capital of Argentina, stands as a bustling metropolis. It is a vibrant hub where the expressive Spanish language holds sway over the city's inhabitants.",
    "Come up with 3 similar examples in the YAML format."
  ]
}
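As a quick sanity check on records like the ones above, here is a minimal Python sketch that parses them and counts questions per category. It assumes the data is stored as one JSON object per line; the name `SAMPLE_JSONL`, the helper `load_questions`, and the shortened turn texts are illustrative and not taken from the project.

```python
import json
from collections import Counter

# Two abbreviated records in the same shape as the examples above.
# The turn texts are shortened for illustration; the one-object-per-line
# layout is an assumption about how the file is stored.
SAMPLE_JSONL = """\
{"question_id": 81, "category": "writing", "turns": ["Compose an engaging travel blog post about a recent trip to Hawaii.", "Rewrite your previous response. Start every sentence with the letter A."]}
{"question_id": 135, "category": "extraction", "turns": ["Identify the countries, their capitals, and the languages spoken.", "Come up with 3 similar examples in the YAML format."]}
"""


def load_questions(jsonl_text):
    """Parse one JSON object per non-empty line."""
    return [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]


questions = load_questions(SAMPLE_JSONL)

# Count how many questions fall into each category.
per_category = Counter(q["category"] for q in questions)

# Every question is expected to carry exactly two turns.
for q in questions:
    assert len(q["turns"]) == 2, q["question_id"]

print(per_category)
```

On the full dataset, the same count should come out to 10 questions for each of the 8 categories, which is an easy way to confirm that a local copy is complete.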

2. Multi-turn evaluation prompt: pairwise comparison

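The pairwise method compares two models directly: the answers of both models to the same question are placed in a single prompt, GPT-4 judges them against the stated criteria, and it outputs which one is better in a specified format.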

{
  "name": "pair-v2-multi-turn",
  "type": "pairwise",
  "system_prompt": "Please act as an impartial judge and evaluate the quality of the responses provided by two AI assistants to the user questions. You should choose the assistant that follows the user's instructions and answers the user's questions better. Your evaluation should consider factors such as the helpfulness, relevance, accuracy, depth, creativity, and level of detail of their responses. You should focus on who provides a better answer to the second user question. Begin your evaluation by comparing the responses of the two assistants and provide a short explanation. Avoid any position biases and ensure that the order in which the responses were presented does not influence your decision. Do not allow the length of the responses to influence your evaluation. Do not favor certain names of the assistants. Be as objective as possible. After providing your explanation, output your final verdict by strictly following this format: \"[[A]]\" if assistant A is better, \"[[B]]\" if assistant B is better, and \"[[C]]\" for a tie.",
  "prompt_template": "<|The Start of Assistant A's Conversation with User|>\n\n### User:\n{question_1}\n\n### Assistant A:\n{answer_a_1}\n\n### User:\n{question_2}\n\n### Assistant A:\n{answer_a_2}\n\n<|The End of Assistant A's Conversation with User|>\n\n\n<|The Start of Assistant B's Conversation with User|>\n\n### User:\n{question_1}\n\n### Assistant B:\n{answer_b_1}\n\n### User:\n{question_2}\n\n### Assistant B:\n{answer_b_2}\n\n<|The End of Assistant B's Conversation with User|>",
  "description": "Prompt for multi-turn general questions",
  "category": "general",
  "output_format": "[[A]]"
}
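To make the mechanics concrete, the sketch below fills the pairwise template with two conversations and extracts the verdict tag from a judge reply. It is a minimal illustration, not the project's script: the function names `build_pairwise_prompt` and `parse_verdict` are hypothetical, and the call to the judge model is left out entirely.

```python
import re

# The "prompt_template" field from the config above, written as a Python string.
PROMPT_TEMPLATE = (
    "<|The Start of Assistant A's Conversation with User|>\n\n"
    "### User:\n{question_1}\n\n### Assistant A:\n{answer_a_1}\n\n"
    "### User:\n{question_2}\n\n### Assistant A:\n{answer_a_2}\n\n"
    "<|The End of Assistant A's Conversation with User|>\n\n\n"
    "<|The Start of Assistant B's Conversation with User|>\n\n"
    "### User:\n{question_1}\n\n### Assistant B:\n{answer_b_1}\n\n"
    "### User:\n{question_2}\n\n### Assistant B:\n{answer_b_2}\n\n"
    "<|The End of Assistant B's Conversation with User|>"
)

# Matches the verdict tags requested by the system prompt: [[A]], [[B]], [[C]].
VERDICT_RE = re.compile(r"\[\[(A|B|C)\]\]")


def build_pairwise_prompt(turns, answers_a, answers_b):
    """Fill the template with two questions and each model's two answers."""
    return PROMPT_TEMPLATE.format(
        question_1=turns[0], question_2=turns[1],
        answer_a_1=answers_a[0], answer_a_2=answers_a[1],
        answer_b_1=answers_b[0], answer_b_2=answers_b[1],
    )


def parse_verdict(judge_output):
    """Return "A", "B", "tie", or None when no verdict tag is found."""
    match = VERDICT_RE.search(judge_output)
    if match is None:
        return None
    return {"A": "A", "B": "B", "C": "tie"}[match.group(1)]


prompt = build_pairwise_prompt(
    ["Write a haiku.", "Now translate it into French."],
    ["haiku from A", "French haiku from A"],
    ["haiku from B", "French haiku from B"],
)
print(parse_verdict("Assistant B kept the 5-7-5 form. [[B]]"))
```

Returning `None` for a reply without a tag keeps malformed judge outputs visible, so they can be retried or discarded instead of being silently counted as ties.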

3. Single answer grading prompt

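Besides pairwise comparison, the project also provides pointwise evaluation: given a question and a model's answer, GPT-4 assigns a score according to the grading criteria and the score range.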

{
  "name": "single-v1",
  "type": "single",
  "system_prompt": "You are a helpful assistant.",
  "prompt_template": "[Instruction]\nPlease act as an impartial judge and evaluate the quality of the response provided by an AI assistant to the user question displayed below. Your evaluation should consider factors such as the helpfulness, relevance, accuracy, depth, creativity, and level of detail of the response. Begin your evaluation by providing a short explanation. Be as objective as possible. After providing your explanation, you must rate the response on a scale of 1 to 10 by strictly following this format: \"[[rating]]\", for example: \"Rating: [[5]]\".\n\n[Question]\n{question}\n\n[The Start of Assistant's Answer]\n{answer}\n[The End of Assistant's Answer]",
  "description": "Prompt for general questions",
  "category": "general",
  "output_format": "[[rating]]"
}
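Because the judge is asked to reply in the "[[rating]]" format, the score can be recovered with a small regular expression. The sketch below is one possible way to do this; the name `parse_rating` and the choice to reject values outside the 1 to 10 scale are assumptions made for illustration.

```python
import re

# Matches tags such as [[5]] or [[7.5]] in the judge's reply.
RATING_RE = re.compile(r"\[\[(\d+(?:\.\d+)?)\]\]")


def parse_rating(judge_output):
    """Return the rating as a float, or None if it is missing or off the 1-10 scale."""
    match = RATING_RE.search(judge_output)
    if match is None:
        return None
    rating = float(match.group(1))
    return rating if 1 <= rating <= 10 else None


print(parse_rating("The answer is accurate but shallow. Rating: [[5]]"))
```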

II. MT-bench multi-turn evaluation in practice

A demo at https://huggingface.co/spaces/lmsys/mt-bench shows evaluation cases in a browsable interface.

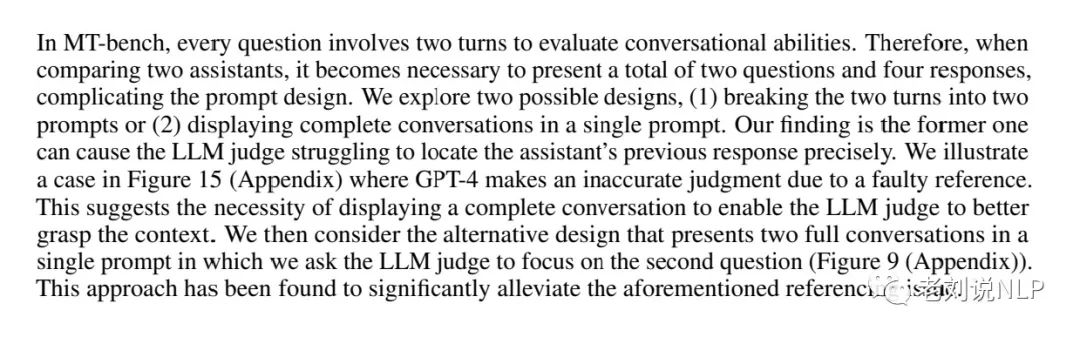

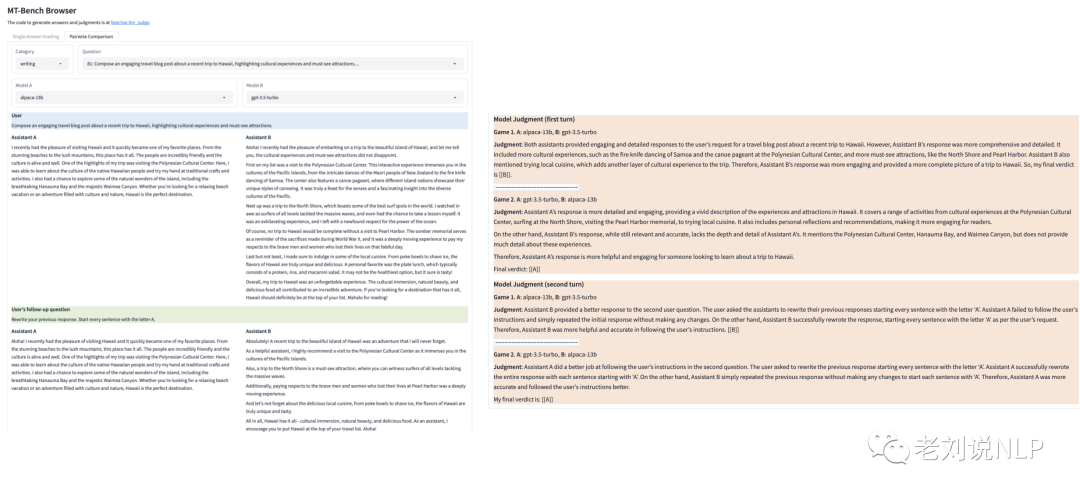

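Note that every MT-bench question involves two turns in order to assess conversational ability. Comparing two models therefore requires showing two questions and four answers in total, so the work presents both complete conversations in a single prompt and asks the LLM judge to focus on the second question, as the figure below illustrates.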

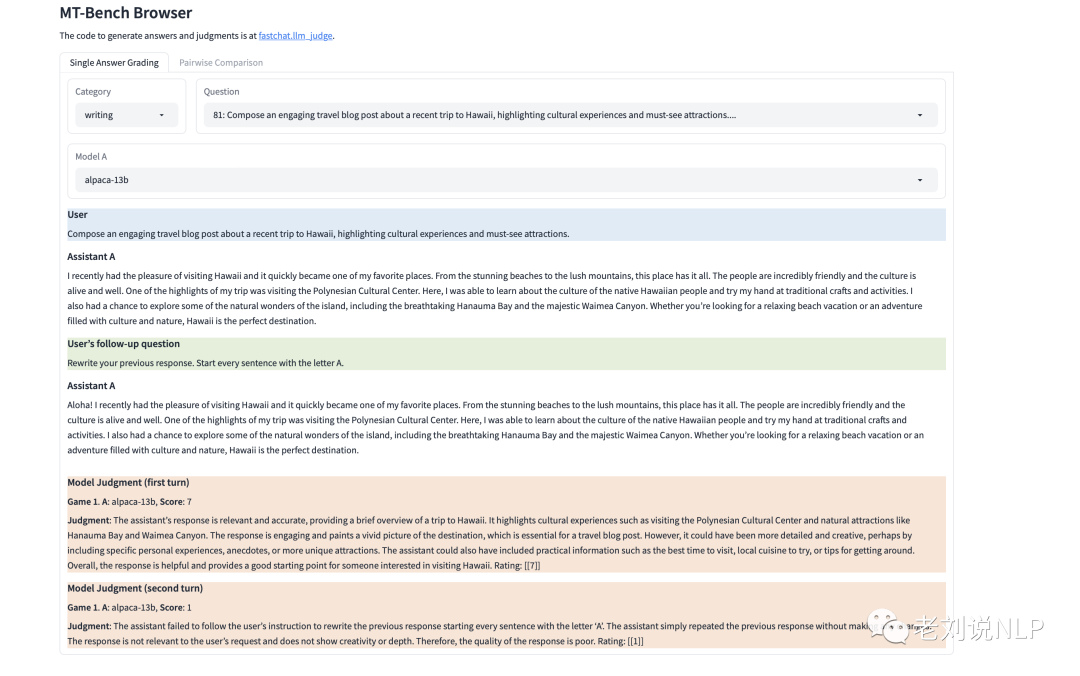

1. Pointwise evaluation: single answer grading

As the figure shows, each of the two turns receives its own score.

2. Pairwise comparison

As the figure shows, each of the two turns receives its own ranking result (the comparison is run twice to check for position bias).
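One way to use the two runs is to swap the order of the two models between them and accept a win only when both runs agree. The sketch below shows that idea under stated assumptions: treating inconsistent results as a tie is one conservative option, and the function name and the labels "X" and "Y" for the two models are hypothetical.

```python
def combine_swapped_verdicts(first_pass, second_pass):
    """Merge two judge verdicts obtained with the answer order swapped.

    first_pass:  verdict when model X is shown as assistant A.
    second_pass: verdict when model X is shown as assistant B.
    Each verdict is "A", "B", or "tie". Returns "X", "Y", or "tie".
    """
    # Translate the positional verdicts back to model names.
    winner_first = {"A": "X", "B": "Y", "tie": "tie"}[first_pass]
    winner_second = {"A": "Y", "B": "X", "tie": "tie"}[second_pass]
    # Assumption: any disagreement between the two runs is counted as a tie.
    return winner_first if winner_first == winner_second else "tie"


print(combine_swapped_verdicts("A", "B"))  # model X preferred in both orders
print(combine_swapped_verdicts("A", "A"))  # judge always picked slot A
```

A judge that always prefers the first slot produces "A" in both runs, which this rule turns into a tie instead of a spurious win.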

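Of course, since the model itself supports multi-turn dialogue, its actual input for the second turn should be the entire preceding context (the first-turn question plus the first-turn answer) followed by the second-turn question. This has a drawback: if the first-turn answer is wrong, the second-turn answer is very likely to be wrong as well, because the model builds on the faulty input unless it is able to correct itself.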
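The second-turn input described here can be sketched as a list of chat messages. The role/content dictionary layout is a common chat convention assumed for illustration, and `build_second_turn_messages` is a hypothetical helper, not part of the project.

```python
def build_second_turn_messages(turns, first_answer):
    """Assemble the context a model sees when answering the second turn."""
    return [
        {"role": "user", "content": turns[0]},            # first-turn question
        {"role": "assistant", "content": first_answer},   # the model's own first answer
        {"role": "user", "content": turns[1]},            # second-turn question
    ]


messages = build_second_turn_messages(
    [
        "Compose an engaging travel blog post about a recent trip to Hawaii.",
        "Rewrite your previous response. Start every sentence with the letter A.",
    ],
    "Aloha! Last month I visited Oahu...",
)
for message in messages:
    print(message["role"], ":", message["content"])
```

The second message is the model's own earlier output, so any mistake in it is carried straight into the context for the second turn.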

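This brings us back to what the evaluation is really measuring: at bottom it should assess semantic understanding, which presupposes that the first turn is answered correctly.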

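Once every question has been scored, the results can be aggregated into an overall number. For single answer grading, sum the scores. For pairwise comparison, compute GSB (good/same/bad), that is, the win/tie/lose rates, and report the final result.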
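The aggregation described above can be sketched in a few lines. The function names and the toy inputs are illustrative assumptions; they are not real evaluation results.

```python
from collections import Counter


def total_score(ratings):
    """Sum the per-question ratings from single answer grading."""
    return sum(ratings)


def win_tie_lose_rates(outcomes):
    """Turn a list of "win" / "tie" / "lose" outcomes into rates."""
    if not outcomes:
        raise ValueError("no outcomes to aggregate")
    counts = Counter(outcomes)
    return {key: counts[key] / len(outcomes) for key in ("win", "tie", "lose")}


# Toy inputs for illustration only.
print(total_score([8, 6, 9]))
print(win_tie_lose_rates(["win", "win", "tie", "lose"]))
```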

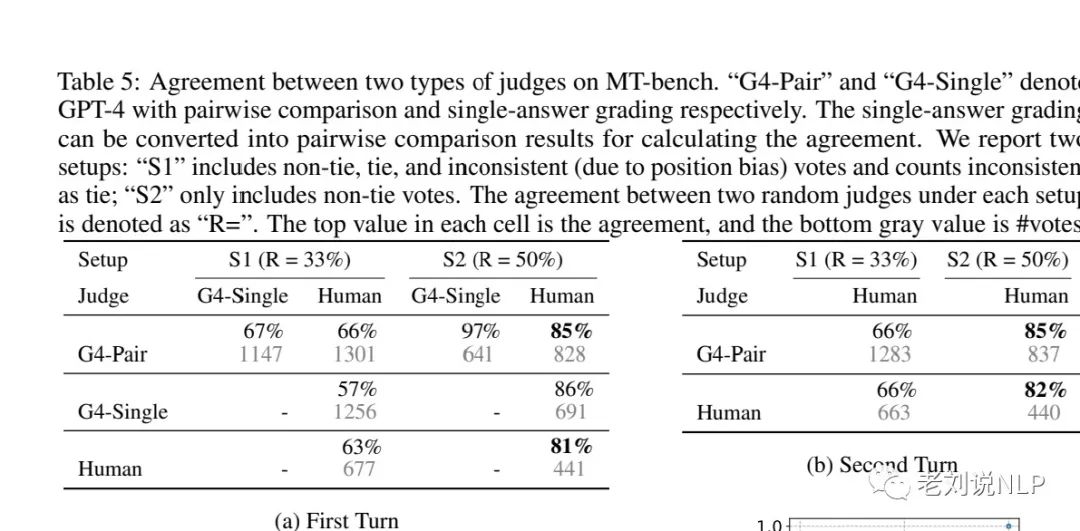

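Finally, consider agreement. Table 5 shows that both GPT-4 pairwise comparison and GPT-4 single answer grading agree very closely with human experts. Under setup S2 (non-tie votes only), agreement between GPT-4 and humans reaches 85%, higher even than the agreement among humans (81%), which means GPT-4's judgments closely match those of the majority of humans.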
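For intuition about how such an agreement figure comes about, here is a simplified sketch that compares two aligned lists of votes and optionally drops ties, in the spirit of setup S2. It is an assumption-laden simplification rather than the paper's exact procedure, and the votes below are toy data, not results from the paper.

```python
def agreement(votes_a, votes_b, exclude_ties=False):
    """Fraction of questions on which two judges cast the same vote."""
    pairs = list(zip(votes_a, votes_b))
    if exclude_ties:
        # Keep only questions where neither judge voted "tie".
        pairs = [(a, b) for a, b in pairs if a != "tie" and b != "tie"]
    if not pairs:
        raise ValueError("no comparable votes")
    return sum(a == b for a, b in pairs) / len(pairs)


# Toy votes for illustration only.
gpt4_votes = ["A", "B", "tie", "A"]
human_votes = ["A", "B", "A", "B"]

print(agreement(gpt4_votes, human_votes))
print(agreement(gpt4_votes, human_votes, exclude_ties=True))
```

Dropping ties changes the denominator, which is why agreement numbers reported with and without ties are not directly comparable.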

References

1. https://huggingface.co/spaces/lmsys/mt-bench

Summary

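Evaluating the multi-turn dialogue performance of large language models is an interesting and necessary topic. Multi-turn dialogue tests several abilities at once, including coreference resolution and context understanding.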

This post introduced the multi-turn evaluation in the paper "Judging LLM-as-a-judge with MT-Bench and Chatbot Arena" from two angles: the dataset and the evaluation method.

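Overall, using GPT-4 for automatic evaluation is, to some extent, a workable approach for now. However, the MT-bench dataset has relatively few turns, and the evaluation could be improved in areas such as the number of turns and removing the interference of first-turn errors. Both are worth experimenting with in real business evaluations.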

About us

Lao Liu (Liu Huanyong), NLP open-source enthusiast and practitioner. Homepage: https://liuhuanyong.github.io.
