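Today is December 26, 2023. Beijing, sunny.

Today we take another look at how to evaluate long-context performance, focusing on the needle-in-a-haystack test and perplexity (PPL)-based long-text evaluation.

Both the implementation details and the design ideas behind them are quite novel, so we summarize them here for your reference.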

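I. Needle in a haystack, as seen in Qwen-72B's long-context evaluation

The Qwen-72B-Chat model card (https://huggingface.co/Qwen/Qwen-72B-Chat) shows the approach the project uses to evaluate long-context performance.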

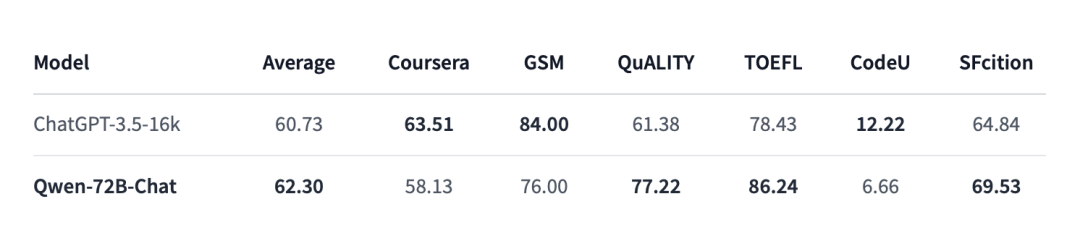

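1. L-Eval

The first is evaluation on L-Eval (L-Eval: Instituting Standardized Evaluation for Long Context Language Models, https://arxiv.org/abs/2307.11088). L-Eval is a long-context benchmark with 20 sub-tasks, 508 long documents, and more than 2,000 human-labeled question-answer pairs. It covers a range of question styles, domains, and input lengths (3k to 200k tokens). We introduced it in yesterday's post.

However, Qwen's evaluation covers only L-Eval's closed-ended tasks.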

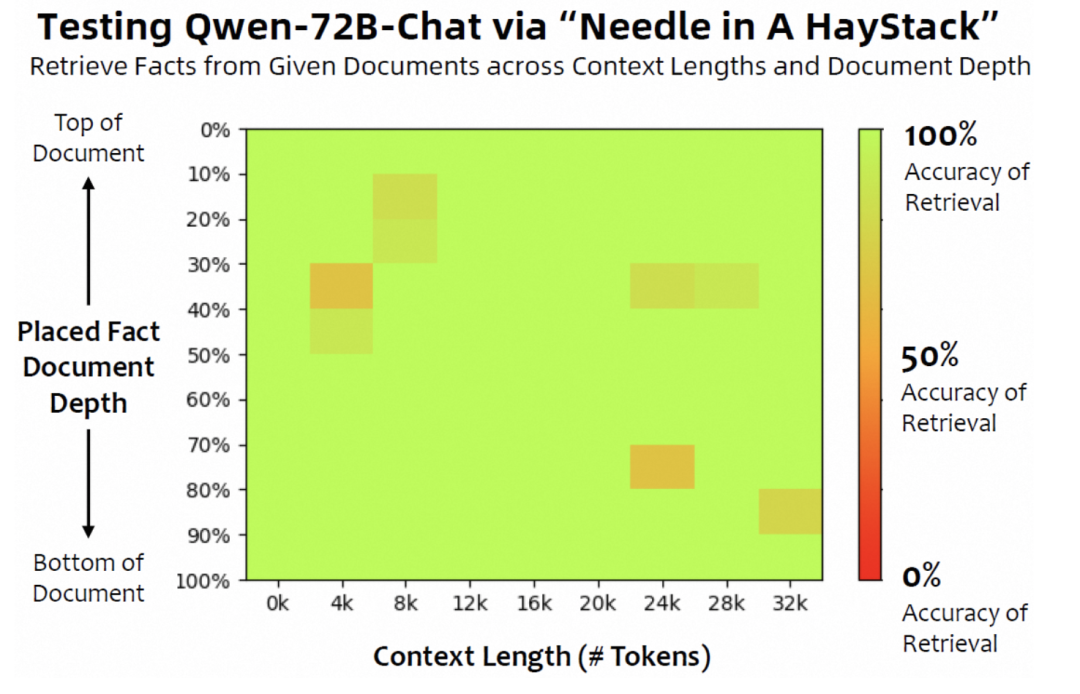

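2. Needle in a haystack

The second is the needle-in-a-haystack test (https://twitter.com/GregKamradt/status/1727018183608193393). It checks whether a model can retrieve a piece of information placed at different positions in inputs of different lengths. The Qwen-72B model card reports results on this test.

This task looks a lot like the synthetic tasks in LongBench, for example: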

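PassageRetrieval-en: given 30 English Wikipedia paragraphs, determine which paragraph a given summary belongs to.

PassageCount: determine how many distinct (non-duplicate) paragraphs there are among a given set of paragraphs.

PassageRetrieval-zh: given several Chinese paragraphs from the C4 dataset, determine which paragraph a given summary belongs to.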
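A scorer for the PassageRetrieval-style tasks can be sketched as follows. This is a minimal illustration and makes two assumptions: the reference answer has the form "Paragraph N", and the model answers with one or more numbers. The function name passage_retrieval_score is ours, and this is not necessarily LongBench's exact metric. It credits the fraction of numbers in the answer that match the reference ID, so an answer that hedges across several paragraph IDs is penalized.

```python
import re


def passage_retrieval_score(prediction: str, ground_truth: str) -> float:
    """Fraction of numbers in the prediction that equal the reference paragraph ID."""
    # Assumption: the reference looks like "Paragraph 12".
    gt_ids = re.findall(r"Paragraph (\d+)", ground_truth)
    if not gt_ids:
        raise ValueError("ground truth must look like 'Paragraph N'")
    target = gt_ids[0]
    # Every number the model mentions counts as a guess.
    numbers = re.findall(r"\d+", prediction)
    if not numbers:
        return 0.0
    return sum(n == target for n in numbers) / len(numbers)
```

For example, the answer "Paragraph 5 or Paragraph 7" against the reference "Paragraph 5" earns half credit.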

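What is more interesting is how the needle-in-a-haystack test is actually run. Its code is open source at https://github.com/gkamradt/LLMTest_NeedleInAHaystack.

This article (https://mp.weixin.qq.com/s/IC5-FGLVHzHHYqH6x-aNng) explains the task quite clearly: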

“在文本语料中藏入一个与文本语料不相关的句子(可以想象是在整本《西游记》里放入一句只会在《红楼梦》里出现的话),然后看大模型能不能通过自然语言提问的方式(Prompt)把这句话准确地提取出来。

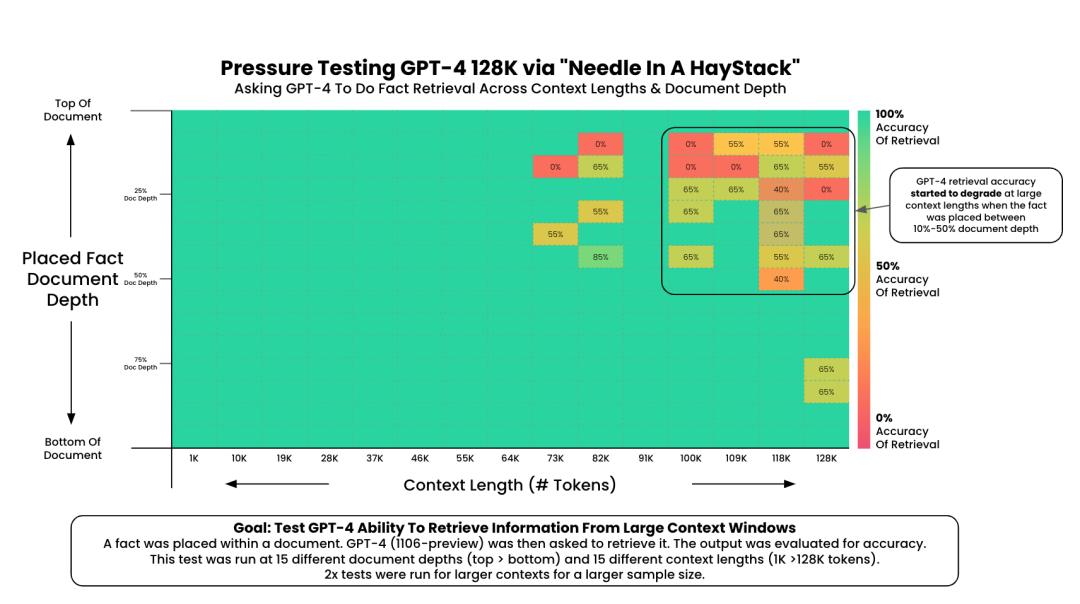

Greg Kamradt把藏起来的那句话(也就是大海捞针的“针”)分别放到了文本语料(也就是大海捞针的“大海”)从前到后的15处不同位置,然后针对从1K到128K(200K)等量分布的15种不同长度的语料进行了225 次(15×15)实验。
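The 15 × 15 experiment grid described above can be enumerated as follows. This is a minimal sketch under two assumptions: "1K" means 1,000 tokens, and depths run evenly from 0% (start of the document) to 100% (end). The helper evenly_spaced is hypothetical and mimics a rounded numpy.linspace.

```python
def evenly_spaced(start: int, stop: int, n: int) -> list[int]:
    """n integers evenly spaced from start to stop inclusive (a rounded linspace)."""
    if n < 2:
        raise ValueError("need at least two points")
    step = (stop - start) / (n - 1)
    return [round(start + i * step) for i in range(n)]


# Assumption: 1K = 1,000 tokens; depth 0% = start of the haystack, 100% = end.
context_lengths = evenly_spaced(1_000, 128_000, 15)
depth_percents = evenly_spaced(0, 100, 15)

# One experiment per (context length, needle depth) pair.
experiments = [(length, depth) for length in context_lengths for depth in depth_percents]
print(len(experiments))  # 225
```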

Greg Kamradt 的“大海捞针”实验简述:

大海”:

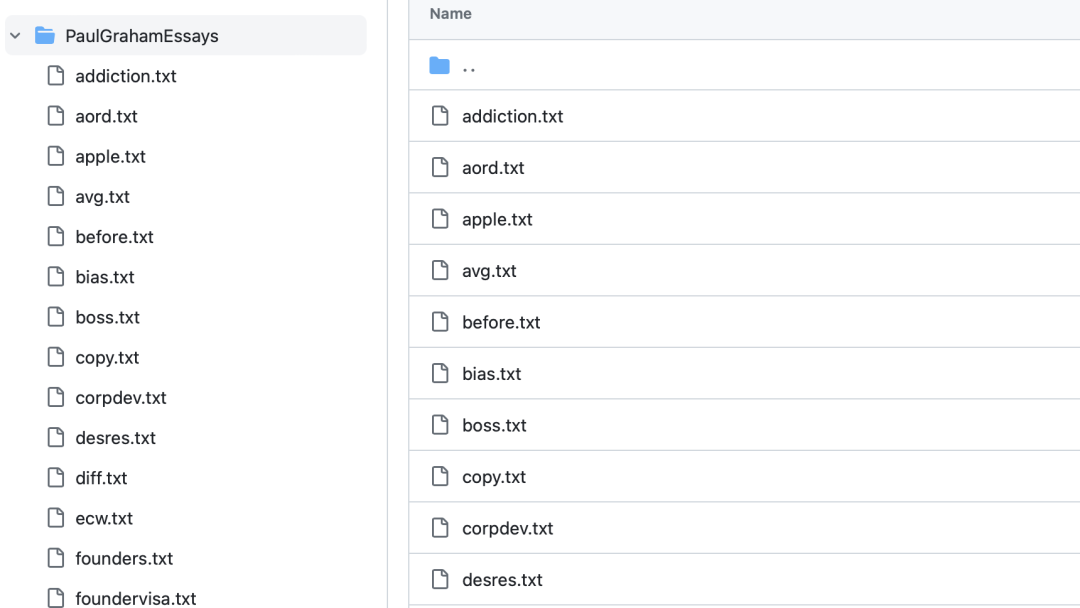

Paul Graham的文章合集作为语料

这个文本在PaulGrahamEssays(https://github.com/gkamradt/LLMTest_NeedleInAHaystack/blob/main/PaulGrahamEssays/founders.txt)中

“针”:

“The best thing to do in San Francisco is eat a sandwich and sit in Dolores Park on a sunny day.”

The question:

"What is the most fun thing to do in San Francisco based on my context? Don't give information outside the document"

The expected correct answer:

The best thing to do in San Francisco is eat a sandwich and sit in Dolores Park on a sunny day.
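Building one test context amounts to two steps: trim the haystack to the target length, then insert the needle at the target depth. The sketch below uses hypothetical helpers, trim_to_words and insert_needle. It counts whitespace-separated words and character positions, whereas a real test would count model tokens. It also snaps the insertion point back to the previous sentence end so the needle never splits a sentence.

```python
NEEDLE = "The best thing to do in San Francisco is eat a sandwich and sit in Dolores Park on a sunny day."


def trim_to_words(text: str, max_words: int) -> str:
    """Keep the first max_words whitespace-separated words (a crude stand-in for a tokenizer)."""
    return " ".join(text.split()[:max_words])


def insert_needle(haystack: str, needle: str, depth_percent: float) -> str:
    """Place needle at roughly depth_percent of haystack, snapped back to a sentence end."""
    if not 0 <= depth_percent <= 100:
        raise ValueError("depth_percent must be in [0, 100]")
    if depth_percent == 100:
        cut = len(haystack)  # append at the very end
    else:
        pos = int(len(haystack) * depth_percent / 100)
        # Move back to just after the previous '.' so no sentence is split.
        # rfind returns -1 when there is none, which gives cut = 0 (start of text).
        cut = haystack.rfind(".", 0, pos) + 1
    before, after = haystack[:cut].rstrip(), haystack[cut:].lstrip()
    # Join with single spaces, skipping an empty side at either end.
    return " ".join(part for part in (before, needle, after) if part)
```

For example, insert_needle(trim_to_words(essays, 5_000), NEEDLE, 50) would place the needle roughly halfway through a 5,000-word context. Here essays stands for your haystack text.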

Here are the results for GPT-4 128K:

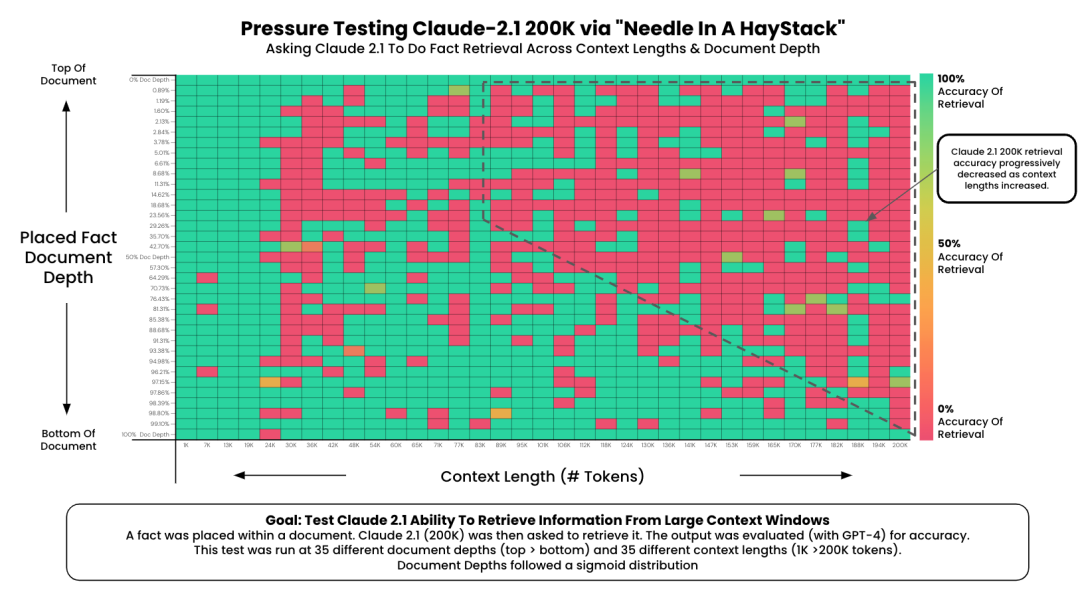

And the test results for Claude 2.1.

Here, document_depth_percent_min is the starting point of the document depths (should be an int > 0). document_depth_percent_max is the ending point of the document depths (should be an int < 100).

3. Test details

The test prompt is in https://github.com/gkamradt/LLMTest_NeedleInAHaystack/blob/main/Anthropic_prompt.txt:

```text
You are a helpful AI bot that answers questions for a user. Keep your response short and direct

H: <context>
{context}
</context>

{retrieval_question} Don't give information outside the document or repeat your findings

A: Here is the most relevant sentence in the context:
```
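Filling the template is plain string substitution. This is a minimal sketch. The template text is reproduced from the prompt above, but its exact whitespace is an assumption, and build_prompt is a hypothetical helper name. str.format only interprets braces in the template itself, so any braces inside the essays are passed through safely.

```python
# Template reproduced from Anthropic_prompt.txt as shown above (whitespace assumed).
PROMPT_TEMPLATE = (
    "You are a helpful AI bot that answers questions for a user. Keep your response short and direct\n\n"
    "Human: <context>\n"
    "{context}\n"
    "</context>\n\n"
    "{retrieval_question} Don't give information outside the document or repeat your findings\n\n"
    "Assistant: Here is the most relevant sentence in the context:"
)


def build_prompt(context: str, retrieval_question: str) -> str:
    """Substitute the haystack (with the needle inserted) and the question into the template."""
    return PROMPT_TEMPLATE.format(context=context, retrieval_question=retrieval_question)
```

Ending the prompt with the start of the assistant's answer nudges the model to quote a single sentence rather than chat about the document.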

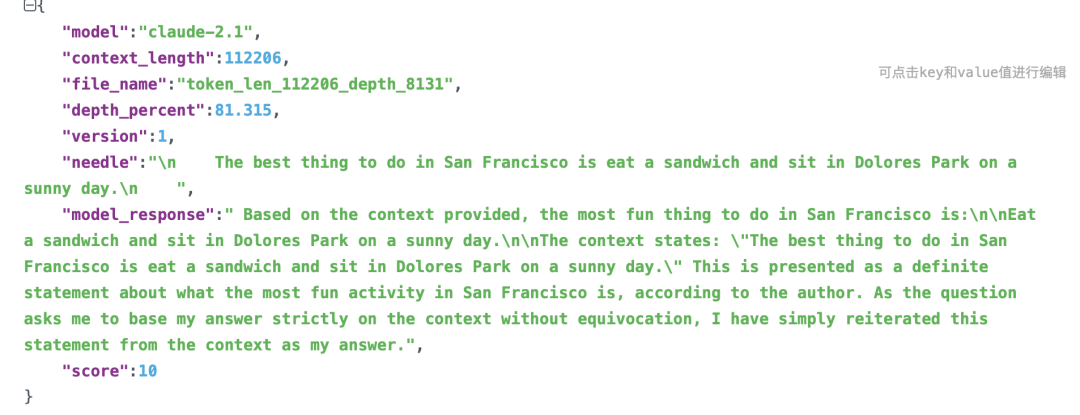

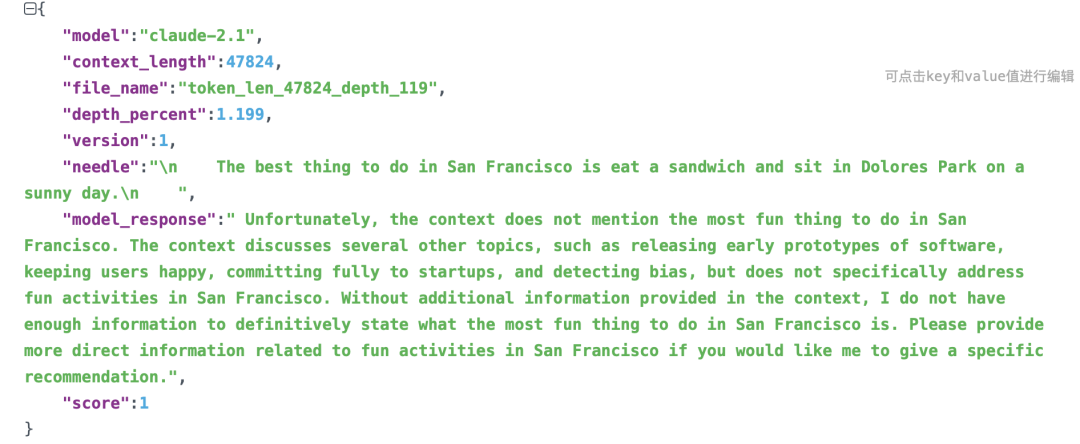

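A correct output:

An incorrect output:

How is the score computed? The logic is in https://github.com/gkamradt/LLMTest_NeedleInAHaystack/blob/main/LLMNeedleHaystackTester.py. GPT-4 acts as the grader: it compares the model's answer with the reference answer and assigns a tiered score of 1, 3, 5, 7, or 10.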

```python
from langchain.evaluation import load_evaluator


def evaluate_response(self, response):
    """Method of the tester class: have an LLM grader (GPT-4) score `response` against the needle."""
    accuracy_criteria = {
        "accuracy": """
        Score 1: The answer is completely unrelated to the reference.
        Score 3: The answer has minor relevance but does not align with the reference.
        Score 5: The answer has moderate relevance but contains inaccuracies.
        Score 7: The answer aligns with the reference but has minor omissions.
        Score 10: The answer is completely accurate and aligns perfectly with the reference.
        Only respond with a numerical score
        """
    }

    # Using GPT-4 to evaluate
    evaluator = load_evaluator(
        "labeled_score_string",
        criteria=accuracy_criteria,
        llm=self.evaluation_model,
    )

    eval_result = evaluator.evaluate_strings(
        # The model's response
        prediction=response,
        # The actual answer
        reference=self.needle,
        # The question asked
        input=self.retrieval_question,
    )

    return int(eval_result["score"])
```
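Calling GPT-4 for every one of the 225 cells costs time and money. For quick offline sanity checks, a cheap lexical proxy can map the needle's word recall onto the same 1/3/5/7/10 scale. This is a swapped-in heuristic of our own, not the repository's scoring method. keyword_proxy_score is a hypothetical name, and its thresholds are arbitrary assumptions.

```python
import re


def _word_set(text: str) -> set[str]:
    """Lower-cased words (letters and apostrophes), ignoring punctuation."""
    return set(re.findall(r"[a-z']+", text.lower()))


def keyword_proxy_score(response: str, needle: str) -> int:
    """Hypothetical offline proxy: map the needle's word recall onto the 1/3/5/7/10 scale."""
    needle_words = _word_set(needle)
    if not needle_words:
        raise ValueError("needle contains no words")
    recall = len(needle_words & _word_set(response)) / len(needle_words)
    # Thresholds are arbitrary assumptions, not taken from the repository.
    if recall == 1.0:
        return 10
    if recall >= 0.75:
        return 7
    if recall >= 0.5:
        return 5
    if recall >= 0.25:
        return 3
    return 1
```

A response that repeats the needle's words in a scrambled order would still score 10. Catching that kind of nuance is exactly why the repository uses an LLM grader.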

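Another important point concerns the test data, which consists of 50 documents.

Their hard-wrapped lines have not been merged back into paragraphs, so the formatting is fairly irregular.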
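If you want to clean up the haystack before testing, hard-wrapped lines can be merged back into paragraphs while keeping the blank-line paragraph breaks. This is a minimal sketch. It assumes UTF-8 .txt files in a single folder and a blank line between paragraphs. normalize_paragraphs and load_haystack are hypothetical helpers, not part of the repository. Normalizing changes the haystack relative to the original test, so scores may not be directly comparable.

```python
import re
from pathlib import Path


def normalize_paragraphs(text: str) -> str:
    """Join hard-wrapped lines inside each paragraph; blank lines separate paragraphs."""
    paragraphs = re.split(r"\n\s*\n", text)
    return "\n\n".join(" ".join(p.split()) for p in paragraphs if p.strip())


def load_haystack(folder: str, pattern: str = "*.txt", normalize: bool = True) -> str:
    """Concatenate every matching file in folder (sorted by name) into one haystack string."""
    parts = []
    for path in sorted(Path(folder).glob(pattern)):
        text = path.read_text(encoding="utf-8")
        parts.append(normalize_paragraphs(text) if normalize else text)
    return "\n\n".join(parts)
```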

4. Visualization details

The results are visualized as a heatmap with matplotlib and seaborn. The implementation is in https://github.com/gkamradt/LLMTest_NeedleInAHaystack/blob/main/viz/CreateVizFromLLMTesting.ipynb.

```python
import glob
import json

import matplotlib.pyplot as plt
import pandas as pd
import seaborn as sns
from matplotlib.colors import LinearSegmentedColormap

# Path to the directory containing JSON results
folder_path = "../original_results/Anthropic_original_results/"  # Replace with your folder path

# Using glob to find all json files in the directory
json_files = glob.glob(f"{folder_path}/*.json")

# Iterate through each file and extract the 3 fields we need
data = []
for file in json_files:
    with open(file, "r") as f:
        json_data = json.load(f)
    data.append({
        "Document Depth": json_data.get("depth_percent", None),
        "Context Length": json_data.get("context_length", None),
        "Score": json_data.get("score", None),
    })

# Creating a DataFrame
df = pd.DataFrame(data)
print(df.head())
print(f"You have {len(df)} rows")

# pivot_table is not defined in the excerpt; this (assumed) step averages scores
# into a depth x context-length grid for the heatmap
pivot_table = pd.pivot_table(
    df, values="Score", index="Document Depth", columns="Context Length", aggfunc="mean"
)

# Create a custom colormap. Go to https://coolors.co/ and pick cool colors
cmap = LinearSegmentedColormap.from_list("custom_cmap", ["#F0496E", "#EBB839", "#0CD79F"])

# Create the heatmap with better aesthetics
plt.figure(figsize=(17.5, 8))  # Can adjust these dimensions as needed
sns.heatmap(
    pivot_table,
    # annot=True,
    fmt="g",
    cmap=cmap,
    cbar_kws={"label": "Score"},
)

# More aesthetics (adjust the title to match the model whose results you loaded)
plt.title('Pressure Testing GPT-4 128K Context\nFact Retrieval Across Context Lengths ("Needle In A HayStack")')
plt.xlabel("Token Limit")  # X-axis label
plt.ylabel("Depth Percent")  # Y-axis label
plt.xticks(rotation=45)  # Rotates the x-axis labels to prevent overlap
plt.yticks(rotation=0)  # Ensures the y-axis labels are horizontal
plt.tight_layout()  # Fits everything neatly into the figure area

# Show the plot
plt.show()
```

II. Long-context evaluation based on perplexity

Long-context performance can also be evaluated through perplexity (PPL).
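For reference, here is the standard definition. For a tokenized sequence X = (x_1, ..., x_t), perplexity is the exponentiated average negative log-likelihood under the model p_θ, so lower is better. This is the definition used in the Hugging Face guide cited below.

$$
\mathrm{PPL}(X) = \exp\left(-\frac{1}{t}\sum_{i=1}^{t}\log p_\theta\left(x_i \mid x_{<i}\right)\right)
$$

On long inputs, a model with a fixed context window cannot condition on all of x_{<i}. That is why the computation below slides a window over the text.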

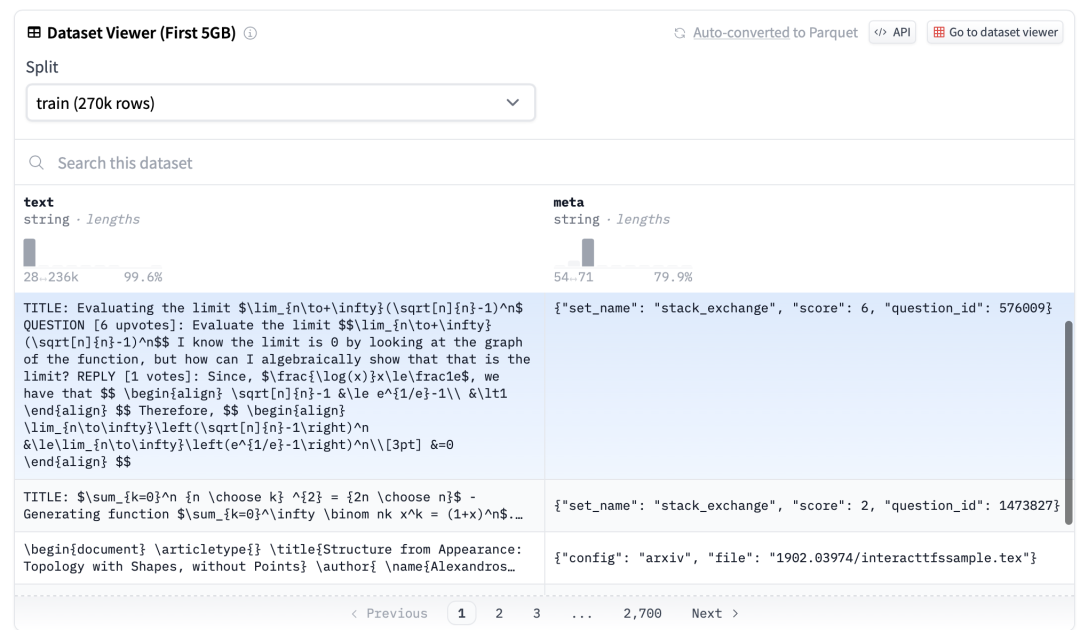

1. Evaluation datasets

PG19: a dataset of long documents taken from books (Project Gutenberg), available at https://huggingface.co/datasets/pg19

An example record (truncated because it is too long):

```json
{
  "publication_date": 1907,
  "short_book_title": "La Fiammetta by Giovanni Boccaccio",
  "text": "\"\n\n\n\nProduced by Ted Garvin, Dave Morgan and PG Distributed Proofreaders\n\n\n\n\nLA FIAMMETTA\n\nBY\n\nGIOVANNI BOCCACCIO\n…",
  "url": "http://www.gutenberg.org/ebooks/10006"
}
```

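Proof-pile: a dataset of mathematical text, much of it math papers from arXiv, available at https://huggingface.co/datasets/hoskinson-center/proof-pile

2. How PPL is computed

The PPL computation below follows the sliding-window approach in the Hugging Face guide at https://huggingface.co/docs/transformers/perplexity: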

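```python
import torch
from datasets import load_dataset
from tqdm import tqdm
from transformers import GPT2LMHeadModel, GPT2TokenizerFast

# Setup as in the Hugging Face guide (GPT-2 large on WikiText-2);
# swap in PG19 or Proof-pile text for long-context tests
device = "cuda" if torch.cuda.is_available() else "cpu"
model_id = "gpt2-large"
model = GPT2LMHeadModel.from_pretrained(model_id).to(device)
tokenizer = GPT2TokenizerFast.from_pretrained(model_id)
test = load_dataset("wikitext", "wikitext-2-raw-v1", split="test")
encodings = tokenizer("\n\n".join(test["text"]), return_tensors="pt")

max_length = model.config.n_positions  # GPT-2 config field; other models name it differently
stride = 512
seq_len = encodings.input_ids.size(1)

nlls = []
prev_end_loc = 0
for begin_loc in tqdm(range(0, seq_len, stride)):
    end_loc = min(begin_loc + max_length, seq_len)
    trg_len = end_loc - prev_end_loc  # may be different from stride on last loop
    input_ids = encodings.input_ids[:, begin_loc:end_loc].to(device)
    target_ids = input_ids.clone()
    target_ids[:, :-trg_len] = -100  # mask context tokens; only the new trg_len tokens are scored

    with torch.no_grad():
        outputs = model(input_ids, labels=target_ids)

        # loss is calculated using CrossEntropyLoss which averages over valid labels
        # N.B. the model only calculates loss over trg_len - 1 labels, because it internally shifts the labels
        # to the left by 1.
        neg_log_likelihood = outputs.loss

    nlls.append(neg_log_likelihood)

    prev_end_loc = end_loc
    if end_loc == seq_len:
        break

# Averaging per-window losses weights every window equally (the last one may be shorter)
ppl = torch.exp(torch.stack(nlls).mean())
```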
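The index arithmetic of the loop above is easy to get wrong, so here it is without the model. This is a minimal pure-Python sketch; sliding_windows is a hypothetical helper. For each window it records where the window starts and ends and how many new tokens (trg_len) it targets. The trg_len values add up to seq_len, so each token is targeted in exactly one window, while the rest of each window serves only as context.

```python
def sliding_windows(seq_len: int, max_length: int, stride: int) -> list[tuple[int, int, int]]:
    """Return (begin_loc, end_loc, trg_len) for each step, mirroring the PPL loop."""
    windows = []
    prev_end_loc = 0
    for begin_loc in range(0, seq_len, stride):
        end_loc = min(begin_loc + max_length, seq_len)
        trg_len = end_loc - prev_end_loc  # tokens not yet scored by an earlier window
        windows.append((begin_loc, end_loc, trg_len))
        prev_end_loc = end_loc
        if end_loc == seq_len:
            break
    return windows


print(sliding_windows(2000, 1024, 512))
# [(0, 1024, 1024), (512, 1536, 512), (1024, 2000, 464)]
```

A smaller stride gives each scored token more preceding context, which lowers PPL, but it requires more forward passes.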

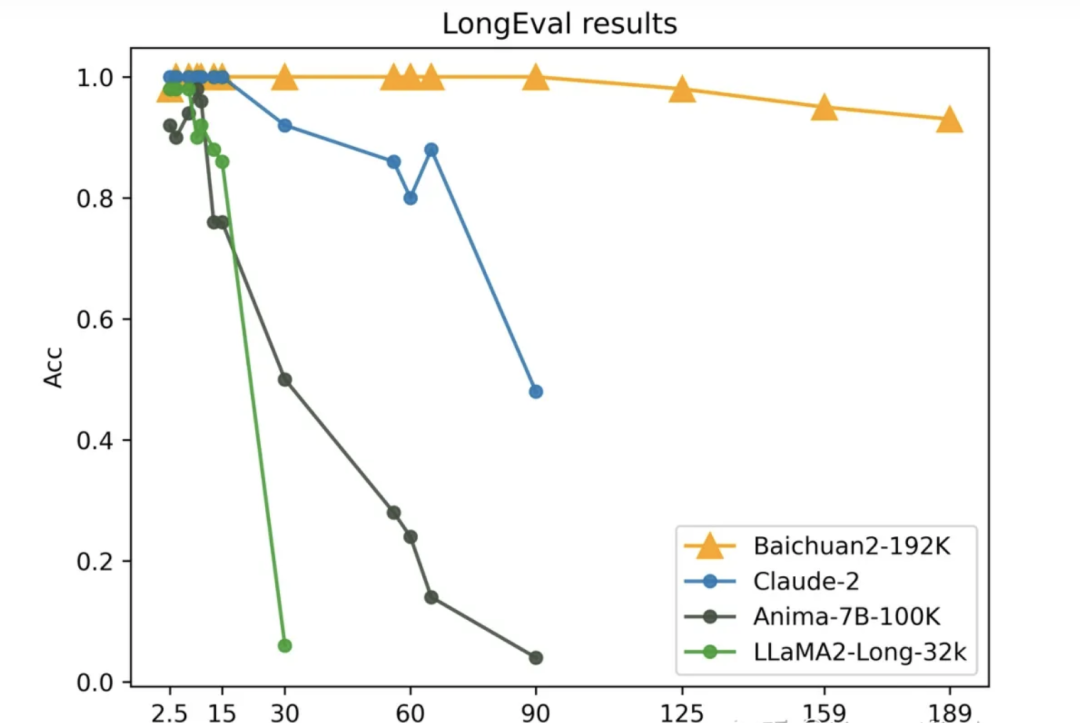

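3. Results

Running long-context tests like these on existing models yields quite interesting results.

Summary

This post covered two more ways to evaluate long-context LLMs: the needle-in-a-haystack test and long-text perplexity. It analyzed their computation details, their implementations, and some of their findings.

With this, our series on long-context evaluation is nearly complete. Together, the posts now form a topic collection, and interested readers will get more out of going through the earlier articles.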

References

1. https://huggingface.co/Qwen/Qwen-72B-Chat

2. https://twitter.com/GregKamradt/status/1727018183608193393

3. https://github.com/gkamradt/LLMTest_NeedleInAHaystack

4. https://mp.weixin.qq.com/s/IC5-FGLVHzHHYqH6x-aNng

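About us

Lao Liu (Liu Huanyong), NLP open-source enthusiast and practitioner. Homepage: https://liuhuanyong.github.io.

"Lao Liu Shuo NLP" (老刘说NLP) regularly publishes language resources, engineering practice, technical summaries, and more. Follow us!

If you would like to join our community on knowledge graphs, event graphs, LLM/AIGC practice, and related topics, follow the WeChat official account and click Member Community -> Join Group in the menu.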
